Tiff file format for pre-processed quad-stereo sets

From ElphelWiki

Image sets

Example tree of a single set:

1527256903_350165/

├── 1527256903_350165.kml

├── jp4 (source files directory)

│ ├── 1527256903_350165_0.jp4

│ ├── ...

│ ├── 1527256903_350165_3.jp4

│ ├── 1527256903_350165_4.jp4

│ ├── ...

│ └── 1527256903_350165_7.jp4

├── rating.txt

├── thumb.jpeg

└── v03 (model version)

├── 1527256903_350165-00-D0.0.jpeg

├── ...

├── 1527256903_350165-07-D0.0.jpeg

├── 1527256903_350165.corr-xml

├── 1527256903_350165-DSI_COMBO.tiff

├── 1527256903_350165-EXTRINSICS.corr-xml

├── 1527256903_350165-img1-texture.png

├── ...

├── 1527256903_350165-img2001-texture.png

├── 1527256903_350165-img_infinity-texture.png

├── 1527256903_350165.mtl

├── 1527256903_350165.obj

├── 1527256903_350165.x3d

└── ml (directory with processed data files for ML)

├── 1527256903_350165-ML_DATA-08B-O-FZ0.05-OFFS-2.00000.tiff

├── 1527256903_350165-ML_DATA-08B-O-FZ0.05-OFFS-1.00000.tiff

├── 1527256903_350165-ML_DATA-08B-O-FZ0.05-OFFS0.00000.tiff

├── 1527256903_350165-ML_DATA-08B-O-FZ0.05-OFFS1.00000.tiff

└── 1527256903_350165-ML_DATA-08B-O-FZ0.05-OFFS2.00000.tiff

where:

- *.jp4 - source files: indices 0..3 belong to quad stereo camera #1, 4..7 to quad stereo camera #2, and so on if there are more cameras in the system.

- *-D0.0.jpeg - disparity = 0; the images are undistorted to a distortion polynomial common to all images

- *.corr-xml - ImageJ plugin's settings file?

- *-DSI_COMBO.tiff - Disparity Space Image - tiff stack

- *-EXTRINSICS.corr-xml - extrinsic parameters of the multicamera system

- *.x3d, *.png - X3D format model with textures. The textures are shared with the OBJ format model

- *.obj, *.mtl, *.png - OBJ format model with textures

- *.tiff - TIFF stack of pre-processed images for ML

- *.kml, rating.txt, thumb.jpeg - files related to the online viewer only
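The naming scheme above can be parsed mechanically. The following is a minimal sketch, not part of python3-imagej-tiff: the helper names `parse_jp4_name` and `parse_ml_offset` are hypothetical, the patterns are inferred only from the example tree, and reading the `OFFS` field as a disparity offset is an assumption based on the `dispOffset` property shown further down.

```python
import re

# Patterns inferred from the example tree only (assumption, not a specification).
JP4_RE = re.compile(r"^(?P<set>\d+_\d+)_(?P<channel>\d+)\.jp4$")
ML_RE = re.compile(
    r"^(?P<set>\d+_\d+)-ML_DATA-.*-OFFS(?P<offset>-?\d+\.\d+)\.tiff$"
)


def parse_jp4_name(name):
    """Return (set_name, camera_index, subcamera_index), indices 0-based.

    Channels 0..3 map to camera 0 (camera #1 in the text), 4..7 to camera 1.
    """
    m = JP4_RE.match(name)
    if m is None:
        raise ValueError(f"not a jp4 source file name: {name!r}")
    channel = int(m.group("channel"))
    return m.group("set"), channel // 4, channel % 4


def parse_ml_offset(name):
    """Return (set_name, offset) for an ML_DATA tiff file name."""
    m = ML_RE.match(name)
    if m is None:
        raise ValueError(f"not an ML_DATA file name: {name!r}")
    return m.group("set"), float(m.group("offset"))


print(parse_jp4_name("1527256903_350165_4.jp4"))
print(parse_ml_offset(
    "1527256903_350165-ML_DATA-08B-O-FZ0.05-OFFS-2.00000.tiff"))
```

Sorting the files of the ml directory by the parsed offset is one simple way to iterate over the five stacks of a set in a fixed order.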

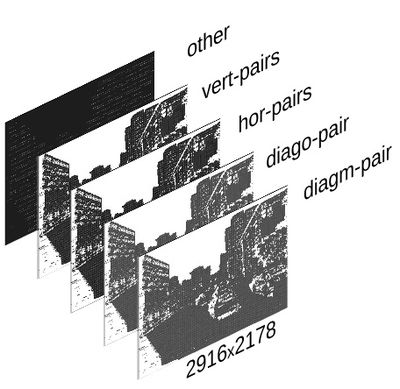

TIFF image stacks for ML

- The TIFF stack format is described in the presentation for CVPR2018, pp.19-21:

- 8 bpp

- layer dimensions 2916x2178

- 5 layers in the stack: [diagm-pair, diago-pair, hor-pairs, vert-pairs, other]

- diagm-pair

- diago-pair

- hor-pairs

- vert-pairs

- other - encoded values: estimated disparity, estimated+residual disparity, and confidence for the residual disparity

- The layers are tiled. The tile width and height are read from the header; currently they are 9x9, which gives 324x242 tiles per layer (2916/9 = 324, 2178/9 = 242).

- The values in the other layer are encoded per tile.

- Essentially it’s a normal TIFF image stack with several tags modified:

- ImageDescription (tag[270]) contains a string with info about number of images, slices

- tag[50838] – a list of the layer names’ lengths

- tag[50839] – binary string with the encoded layer labels and extra info

- The source files are processed using a plugin for ImageJ, the output file for each set is a tiff stack

- There are a few ways to view the stack:

ImageJ

ImageJ (or Fiji) has native support for stacks. Each layer of the stack has a name label stored (along with related xml info) in the ImageJ tiff tags. To read the tiff tags in ImageJ, go to Image > Show Info...

Python

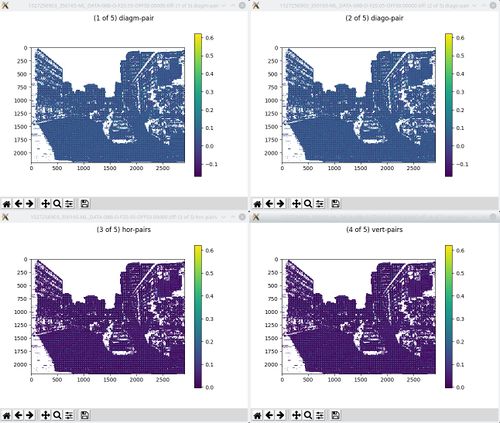

1. Use imagej_tiff.py from python3-imagej-tiff to:

- Get TIFF tag values (Pillow)

- Parse Properties xml data stored by ImageJ in the tiff tags

- Get tile dimensions from Properties

- Convert layers to numpy arrays (split into tiles or optionally not split) for further computations and plotting
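Independently of imagej_tiff.py, the two ImageJ tags (50838 and 50839) can be decoded with the standard library alone. The sketch below assumes the layout that ImageJ is generally understood to use for tag 50839: a 4-byte magic `IJIJ`, then (type, count) pairs, then the items back to back, with text stored as UTF-16. Tag 50838 is assumed to hold the header size followed by the byte size of each item. This layout is not stated on this page, so treat it as an assumption and compare against imagej_tiff.py. The function name `decode_imagej_metadata` is hypothetical.

```python
import struct

TEXT_TYPES = {"info", "labl", "prop"}  # item types assumed to hold UTF-16 text


def decode_imagej_metadata(data, byte_counts, byteorder=">"):
    """Split the raw bytes of tag 50839 into {type: [items]}.

    data        -- raw bytes of tag 50839
    byte_counts -- integers of tag 50838: header size, then one size per item
    byteorder   -- ">" for big-endian TIFF files, "<" for little-endian ones
    """
    magic = b"IJIJ" if byteorder == ">" else b"JIJI"
    if data[:4] != magic:
        raise ValueError("ImageJ magic number not found")
    n_types = (byte_counts[0] - 4) // 8
    header_end = 4 + 8 * n_types
    header = struct.unpack(byteorder + "4sI" * n_types, data[4:header_end])
    codec = "utf-16-be" if byteorder == ">" else "utf-16-le"

    result = {}
    pos = header_end
    item = 1  # index into byte_counts; entry 0 is the header size
    for raw_type, count in zip(header[0::2], header[1::2]):
        if byteorder == "<":
            raw_type = raw_type[::-1]  # 4-char code is stored as an integer
        key = raw_type.decode("ascii")
        values = []
        for _ in range(count):
            chunk = data[pos:pos + byte_counts[item]]
            values.append(chunk.decode(codec) if key in TEXT_TYPES else chunk)
            pos += byte_counts[item]
            item += 1
        result[key] = values
    return result


# Synthetic example: two layer labels packed in the assumed layout.
labels = ["diagm-pair", "other"]
items = [s.encode("utf-16-be") for s in labels]
header = b"IJIJ" + struct.pack(">4sI", b"labl", len(items))
meta = decode_imagej_metadata(header + b"".join(items),
                              [len(header)] + [len(i) for i in items])
print(meta["labl"])
```

With Pillow, the raw inputs would typically come from the `tag_v2` mapping of an opened TIFF, for example `img.tag_v2[50838]` and `img.tag_v2[50839]`. The byte order of the actual files is not stated here and would need to be checked.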

Example run of imagej_tiff.py (more examples are commented out in the __main__ section of the script):

~$ python3 imagej_tiff.py 1527256903_350165-ML_DATA-08B-O-FZ0.05-OFFS0.00000.tiff

time: 1531344391.7055812

time: 1531344392.5336654

TIFF stack labels: ['diagm-pair', 'diago-pair', 'hor-pairs', 'vert-pairs', 'other']

<?xml version="1.0" ?>

<properties>

<ML_OTHER_TARGET>0</ML_OTHER_TARGET>

<tileWidth>9</tileWidth>

<disparityRadiusMain>257.22231560274076</disparityRadiusMain>

<comment_ML_OTHER_GTRUTH_STRENGTH>Offset of the ground truth strength in the "other" layer tile</comment_ML_OTHER_GTRUTH_STRENGTH>

<data_min>-0.16894744988183344</data_min>

<comment_intercameraBaseline>Horizontal distance between the main and the auxiliary camera centers (mm). Disparity is specified for the main camera</comment_intercameraBaseline>

<ML_OTHER_GTRUTH>2</ML_OTHER_GTRUTH>

<data_max>0.6260986600450271</data_max>

<disparityRadiusAux>151.5308819757923</disparityRadiusAux>

<comment_disparityRadiusAux>Side of the square where 4 main camera subcameras are located (mm). Disparity is specified for the main camera</comment_disparityRadiusAux>

<comment_disparityRadiusMain>Side of the square where 4 main camera subcameras are located (mm)</comment_disparityRadiusMain>

<comment_dispOffset>Tile target disparity minum ground truth disparity</comment_dispOffset>

<comment_tileWidth>Square tile size for each 2d correlation, always odd</comment_tileWidth>

<comment_data_min>Defined only for 8bpp mode - value, corresponding to -127 (-128 is NaN)</comment_data_min>

<comment_data_max>Defined only for 8bpp mode - value, corresponding to +127 (-128 is NaN)</comment_data_max>

<comment_ML_OTHER_TARGET>Offset of the target disparity in the "other" layer tile</comment_ML_OTHER_TARGET>

<VERSION>1.0</VERSION>

<dispOffset>0.0</dispOffset>

<comment_ML_OTHER_GTRUTH>Offset of the ground truth disparity in the "other" layer tile</comment_ML_OTHER_GTRUTH>

<ML_OTHER_GTRUTH_STRENGTH>4</ML_OTHER_GTRUTH_STRENGTH>

<intercameraBaseline>1256.0</intercameraBaseline>

</properties>

Tiles shape: 9x9

Data min: -0.16894744988183344

Data max: 0.6260986600450271

(2178, 2916, 5)

Stack of images shape: (242, 324, 9, 9, 4)

time: 1531344392.7290232

Stack of values shape: (242, 324, 3)

time: 1531344393.5556033
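The Properties block printed above carries what is needed to turn 8 bpp samples back into physical values: per the `comment_data_min` and `comment_data_max` entries, -127 corresponds to `data_min`, +127 to `data_max`, and -128 marks NaN. The sketch below parses a shortened copy of that XML and applies a linear mapping between the two end points. The linearity, the handling of unsigned bytes, and the helper names (`parse_properties`, `to_signed`, `decode_8bpp`) are assumptions, not taken from imagej_tiff.py.

```python
import math
import xml.etree.ElementTree as ET

# Shortened copy of the Properties XML from the output above.
PROPERTIES_XML = """<?xml version="1.0" ?>
<properties>
<tileWidth>9</tileWidth>
<data_min>-0.16894744988183344</data_min>
<data_max>0.6260986600450271</data_max>
<dispOffset>0.0</dispOffset>
</properties>"""


def parse_properties(xml_text):
    """Return {name: text} for all entries except the comment_* ones."""
    root = ET.fromstring(xml_text)
    return {child.tag: child.text
            for child in root
            if not child.tag.startswith("comment_")}


def to_signed(byte_value):
    """Reinterpret an unsigned byte (0..255) as a signed one (-128..127)."""
    return byte_value - 256 if byte_value > 127 else byte_value


def decode_8bpp(raw, data_min, data_max):
    """Map a signed sample: -127 -> data_min, +127 -> data_max, -128 -> NaN.

    Values in between are interpolated linearly (assumption).
    """
    if raw == -128:
        return math.nan
    return data_min + (raw + 127) * (data_max - data_min) / 254.0


props = parse_properties(PROPERTIES_XML)
data_min = float(props["data_min"])
data_max = float(props["data_max"])
for sample in (0x81, 0x00, 0x7F, 0x80):  # -127, 0, +127, -128 as raw bytes
    print(sample, decode_8bpp(to_signed(sample), data_min, data_max))
```

For whole layers the same arithmetic can be applied to a numpy array in one step; the per-sample form is used here only to keep the mapping easy to read.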

Upon opening, the TIFF is read into a numpy array of shape (height, width, layer). For further processing it is reshaped to (height_in_tiles, width_in_tiles, tile_height, tile_width, layer), for example:

original tiff stack shape: (2178, 2916, 5)

image data: (242, 324, 9, 9, 4)

values data: (242, 324, 3)
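The reshaping described above can be sketched with numpy as follows. This is an illustration of the shape arithmetic, not the code of imagej_tiff.py: the function name `split_into_tiles` is hypothetical and a zero-filled array stands in for a real stack. Decoding the per-tile values of the "other" layer is left to imagej_tiff.py, since the encoding is not spelled out here.

```python
import numpy as np


def split_into_tiles(stack, tile_h, tile_w):
    """(H, W, L) -> (H // tile_h, W // tile_w, tile_h, tile_w, L)."""
    h, w, layers = stack.shape
    if h % tile_h or w % tile_w:
        raise ValueError("image size is not a multiple of the tile size")
    # Split each axis into (tile index, offset inside tile) ...
    tiles = stack.reshape(h // tile_h, tile_h, w // tile_w, tile_w, layers)
    # ... then bring the two tile indices to the front.
    return tiles.transpose(0, 2, 1, 3, 4)


# Placeholder with the dimensions of a real stack (all zeros).
stack = np.zeros((2178, 2916, 5), dtype=np.int8)
tiles = split_into_tiles(stack, 9, 9)
image_data = tiles[..., :4]  # the four correlation layers, without "other"

print(tiles.shape)       # (242, 324, 9, 9, 5)
print(image_data.shape)  # (242, 324, 9, 9, 4)
```

A plain `reshape` to the 5-D shape without the `transpose` would mix pixels from neighbouring tiles, which is why the two steps are kept separate.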

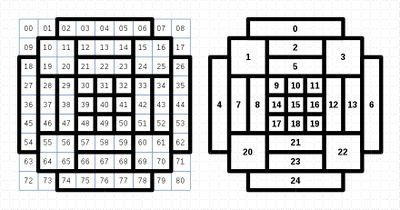

2. Use pack_tile.py from python3-imagej-tiff to:

- Pack image tiles for better structuring for ML

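    # The current packing is hardcoded in the script: (TY,TX,9,9,4) -> (TY,TX,100)
    import numpy as np
    import pack_tile as pile

    # Get tiles from a tiff stack: `tile` is the (TY,TX,9,9,4) image data array
    # and `values` is the (TY,TX,3) values array, both obtained with imagej_tiff.py
    packed_tiles = pile.pack(tile)

    # packed_tiles get stacked with the estimated disparity (see test_nn_feed.py)
    packed_tiles = np.dstack((packed_tiles, values[:,:,0]))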
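As a quick shape check of the stacking step, the sketch below uses zero-filled placeholders in place of the real output of `pile.pack(...)` and of the values array. The packing itself is not reproduced, and the variable name `features` is hypothetical. Only the array shapes follow the text.

```python
import numpy as np

TY, TX = 242, 324  # tiles per column and per row for a 2178x2916 layer

# Placeholders (assumption): real data would come from pack_tile.py
# and imagej_tiff.py respectively.
packed_tiles = np.zeros((TY, TX, 100), dtype=np.float32)
values = np.zeros((TY, TX, 3), dtype=np.float32)

# np.dstack treats the 2-D slice values[:, :, 0] as (TY, TX, 1) and
# appends it along the last axis.
features = np.dstack((packed_tiles, values[:, :, 0]))
print(features.shape)  # (242, 324, 101)
```

Each tile thus ends up as a vector of 101 numbers: 100 packed correlation values followed by the estimated disparity.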